Teacher Diagnostic

Interpreting the Data

The purpose of the Teacher Diagnostic reports is to help teachers assess the growth of students at different achievement levels. These reports can help teachers and administrators set priorities for differentiating instruction to meet the needs of students at all achievement levels.

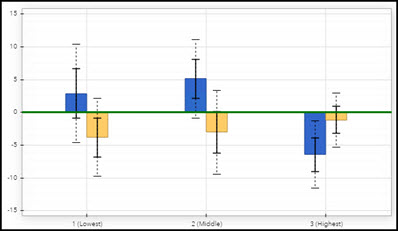

When the Teacher Diagnostic report opens, the data is displayed in a bar chart.

In this chart, the blue bars represent the growth of students in the most recent year. If you have data from the previous year, you'll also see gold bars on the chart. The gold bars represent the growth of students that this teacher had instructional responsibility for in the same subject or grade in the previous year. (The teacher has instructional responsibility for different students each year. Therefore, these are not the same students represented by the blue bars.) The green line represents expected growth.

Each bar has solid and dotted black whiskers. The solid whiskers mark one standard error above and below the growth measure, and the dotted whiskers mark two standard errors above and below the growth measure. It's important to consider the standard error as you interpret the growth measures represented by the bars on the chart. Consider the overall pattern of the bars rather than focusing on any individual value. That said, the following guidelines can help:

- A bar that is at least one standard error above the line suggests that the group's average achievement level increased. If the bar is at least two standard errors above the line, the evidence of growth is even stronger.

- Likewise, if the bar is at least one standard error below the green line, the group likely lost ground academically, on average. If the bar is at least two standard errors below the line, the evidence is stronger.

- Regardless of whether the bar is above or below the green line, if it is within one standard error of the line, the evidence suggests the group's average achievement did not increase or decrease.
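
For readers who work with exported growth data, the guidelines above can be sketched as a small Python function. This is only an illustration, not part of the reporting system: the function name `interpret_growth`, its parameters, and the wording of the returned labels are all hypothetical, and the caller is assumed to supply the expected-growth value that the green line represents.

```python
def interpret_growth(growth_measure: float,
                     standard_error: float,
                     expected_growth: float) -> str:
    """Describe a growth measure relative to expected growth.

    Hypothetical helper: the labels are illustrative wording, not
    text produced by the report itself.
    """
    if standard_error <= 0:
        raise ValueError("standard_error must be positive")

    # Distance from the expected-growth line, in standard errors.
    distance = (growth_measure - expected_growth) / standard_error

    if distance >= 2:
        return "stronger evidence of more growth than expected"
    if distance >= 1:
        return "evidence of more growth than expected"
    if distance <= -2:
        return "stronger evidence of less growth than expected"
    if distance <= -1:
        return "evidence of less growth than expected"
    # Within one standard error of the line, above or below.
    return "growth about as expected"


# Example with made-up numbers: a bar 2.5 standard errors above the line.
print(interpret_growth(growth_measure=3.0, standard_error=1.2, expected_growth=0.0))
```

A single label like this is a convenience for one bar at a time. The overall pattern across achievement groups still matters more than any individual value.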

Keep in mind that the percentage of instructional responsibility is factored only into the analysis that generates the growth measures on the value-added reports. In contrast, the percentage of instructional responsibility is not included in the calculation of the growth measures in the diagnostic reports. In the diagnostic reports, the growth measure for each group reflects the influence of all teachers who had instructional responsibility in the selected grade and subject or course.

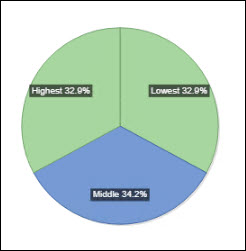

It can also be helpful to view the pie chart.

The size of each pie slice represents the percentage of students who were in each of the achievement groups. Students are placed into these groups based on where their achievement level in this subject falls in the state distribution, so a teacher's students might not be evenly distributed across the three groups. Teachers who serve a lower-achieving population will see more students in achievement group 1, whereas teachers with a large number of high-achievers will see more students in achievement group 3. It's possible for students at all achievement levels to demonstrate growth.

The slices of the pie are color-coded to indicate whether the group's average achievement level increased, decreased, or remained about the same compared to expected growth.

| Diagnostic Color | Growth Measure Compared to Expected Growth | Interpretation |
|---|---|---|
| Light Blue | At least one standard error above | Moderate but not significant evidence that the students made more growth than expected. |
| Light Green | Between one standard error above and one standard error below | Evidence that the students made growth as expected. |
| Light Red | More than one standard error below | Moderate but not significant evidence that the students made less growth than expected. |
| White | N/A | Not enough students to generate a growth measure. |
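
The color rules in the table can be expressed as a short lookup, sketched here in Python. The function name `diagnostic_color` and its parameters are hypothetical, and `None` is an assumed stand-in for a group with too few students to generate a growth measure. The boundary handling follows the table's wording literally ("at least" one standard error above, "more than" one standard error below), so a value exactly one standard error below the line is treated as Light Green here; the report itself may handle that edge differently.

```python
from typing import Optional


def diagnostic_color(growth_measure: Optional[float],
                     standard_error: Optional[float],
                     expected_growth: float) -> str:
    """Map a group's growth measure to a pie-slice color (illustrative only)."""
    # Assumption: None means no growth measure could be generated.
    if growth_measure is None or standard_error is None:
        return "White"

    upper = expected_growth + standard_error  # one standard error above
    lower = expected_growth - standard_error  # one standard error below

    if growth_measure >= upper:   # "at least one standard error above"
        return "Light Blue"
    if growth_measure < lower:    # "more than one standard error below"
        return "Light Red"
    return "Light Green"


# Example with made-up values for three achievement groups.
groups = {1: (-1.5, 1.0), 2: (0.2, 1.0), 3: (None, None)}
for group, (measure, se) in groups.items():
    print(group, diagnostic_color(measure, se, expected_growth=0.0))
```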

As you reflect on these reports, you might want to ask yourself these questions:

- Did each group make enough growth to at least meet expected growth?

- Is there a difference in the amount of growth the groups made? For example, did the group of high-achievers make significantly more growth than the low-achievers?

- If there is a difference in the amount of growth across achievement groups, what factors might have contributed to the differences?

- How can this information inform instructional practices, strategies, and programs?